The Great AI Dilemma

The time for careful consideration of AI regulation options is now. In a few years, it could be too late | Edition #280

A few weeks ago, I wrote that, from a legal perspective, 2026 would be a make-or-break year for AI.

The regulatory Wild West we are currently living in cannot possibly persist without chaos reigning and people realizing that they are fully unprotected from the negative consequences of AI development and deployment.

We are still in March, but it has already become clear that this is the year in which democratically elected authorities must take bold decisions on AI.

-

An important factor that has led us to where we are now is that the risk profile and the social impact of AI have been clearly underestimated over the past 10-15 years of AI policy debates.

Those debates did not anticipate the level of acceleration and the widespread AI development and deployment we have observed over the past three years.

As a consequence, even laws enacted between 2022 and 2024, including the EU AI Act, are already outdated and ineffective in addressing threats and harms that became more evident and widespread in the past few months.

The growing cases of AI-related suicide, mental health harm, emotional manipulation, political manipulation, misinformation, scams, security vulnerabilities, synthetic content flooding, copyright infringement claims, competition abuse, AI washing, layoffs, institutional instability, low preparedness, global disparities, and other issues illustrate how AI’s impact has gotten out of control in a relatively short period of time.

At the same time, most regulatory authorities seem to have been paralyzed and afraid to take bold decisions over the last three years.

They fear that regulatory action could stifle innovation and delay productivity gains (arguments often raised in the EU) or make them “lose the AI race” and reward adversaries (arguments usually raised in the U.S.).

This is evident from what is happening in the EU.

The EU AI Act was enacted in August 2024. A year and a half later, even before it was fully enforceable, the Digital Omnibus proposal aims to postpone, amend, and dilute it.

This is the same bloc that took the lead in 2016 by enacting the GDPR and prioritizing fundamental rights. It is 2026, and the bloc no longer seems sure of its stance (or seems hesitant to stand by it).

This is also happening in other countries that have chosen to take a “wait-and-see” approach to AI, as well as those that have explicitly stated that prioritizing innovation is the main goal.

The problem is that inaction has a price.

Given the pace of acceleration and techno-social change, doing nothing today might mean that, in a year or two, it could already be too late. It may no longer be possible to reverse the structural harm.

We might have to live in some sort of globally heterogeneous, AI-powered ‘AI-first’ reality that idealizes AI and endorses AI companies’ interests and profit-making routes, but no longer serves our human interests, rights, and needs.

To tell you the truth, I think this is exactly what AI companies want and are indirectly pushing for, but they do not say it explicitly.

Ongoing AI hype, ultra-optimistic predictions about all-disease-curing AGIs in one decade, alien-like intelligence, and strange policy-like discussions about an AI model’s self-awareness and “wellbeing” serve to thicken the smoke cloud and distract us from serious policy and regulatory discussions.

When authorities remain paralyzed and do nothing about what is negatively affecting people (or about how most people want AI to be governed), AI companies will step in and solve things their way.

As companies (and not democratically elected officials), they will necessarily solve things in ways that keep them alive longer and maximize their profits more effectively, including prioritizing AI, treating it as a special entity, an essential need, an “alien,” and a commodity that must be constantly incentivized and multiplied.

They will create and endorse whatever narrative keeps the profits coming, even if the narrative is “you should probably implant a Neuralink-like AI chip to have maximum intelligence and productivity, better understand how AI systems reason, and flourish together.”

Sadly, I am not joking, and I have seen these types of arguments circulating online.

Many forget this and get lost in companies’ beautifully PR-tailored, legally filtered narratives, but that is how companies work.

They are not public utility entities, and their priority is not to reflect democratic values, promote due process, or spread well-being. They need growing profits to survive, or they die. They will do everything to survive.

As I wrote above, we are still in March, but it seems that AI’s make-or-break legal moment is approaching.

In the U.S., the recent face-off between Anthropic and the U.S. Department of War seems to have exposed an internal split.

The federal government wants to use AI for all lawful purposes, including in cases in which existing laws might not reflect AI model capabilities or emerging risks, such as those involving internal surveillance and autonomous weapons.

Many Americans did not like this and supported Anthropic’s firm stance and internal red lines (and now the lawsuit against the Department of War).

This is a great opportunity for the U.S. to engage in complex yet necessary debates about AI regulation for both civil and military use, as well as serious discussions on AI safety.

In the EU, the Digital Omnibus proposal has been a wake-up call for all sectors to face the legal, economic, and political challenges shaping the future of Europe.

Does the EU still want to lead in the protection of fundamental rights and the prioritization of trustworthy AI? What are the potential measures that truly incentivize innovation while also preserving European legal, ethical, and cultural traditions?

Authorities worldwide should face the techno-social reality and make meaningful, coherent, and effective decisions.

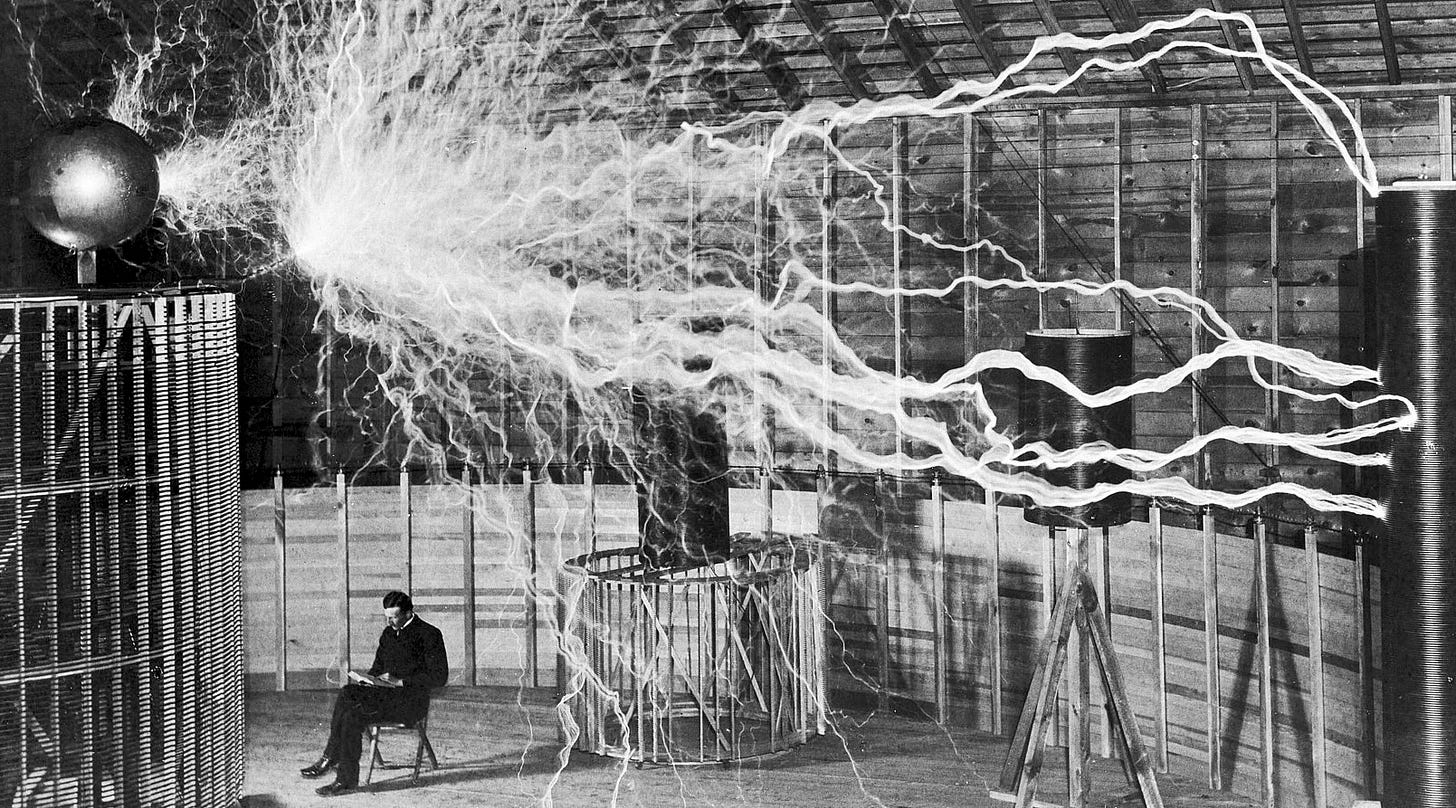

Should powerful AI models be regulated like atomic bombs, on the view that their safety risk is too high to be entrusted to private companies, even as military bodies compete for strategic access to them?

Should access to AI systems be treated as a “right to augmented cognition,” be subsidized, and offered for free to people in an attempt to reduce inequality and increase national productivity?

Should there be strict rules for AI training and model development, including government-sponsored benchmarks, standards, and official limits, as well as close scrutiny of companies’ AI safety decisions?

Etc.

There are many other options, but no easy choices. Countries, individually and collectively, should be debating them transparently and democratically.

Doing nothing should not be an option, as in a few years, it could be too late.

Wherever you are, I invite you to make your voice heard.

As the old internet dies, polluted by low-quality AI-generated content, you can always find pioneering, human-made thought leadership here. Thank you for helping me make this newsletter a leading publication in the field.

This is a powerful framing of the dilemma. What strikes me most is that the real challenge may not be AI itself, but the architecture of incentives surrounding it.

Every transformative technology ends up amplifying the intentions of the systems that deploy it—economic, political, or social. AI just accelerates that dynamic dramatically.

The question I keep coming back to is, what kind of infrastructure do we need so human intent remains the guiding force rather than becoming a byproduct of algorithmic optimization?

If we design systems that only optimize engagement, profit, or speed, AI will simply magnify those signals. But if we build systems that help people clarify and act on their genuine intentions, AI could become a tool for alignment rather than distortion.

In other words, the dilemma may not just be about regulating AI but about redesigning the digital environments in which human decisions are formed.

The reason AI governance keeps failing isn't complicated. In fact, it's simply that the countries and companies with the most power to build it have the least incentive to constrain it. Money and strategic advantage consistently win over accountability.

We've seen this before, btw. I've been researching nuclear weapons governance, and the parallels to AI are uncomfortable, to say the least: the nations that built the weapons were the same ones who spent decades blocking binding international oversight because it threatened their dominance. Meaningful governance only arrived after the world nearly ended in 1962. Twenty-three years after the first bomb!

We don't have 23 years this time.

My research paper documents what went wrong with nuclear governance and what AI governance needs to learn from it before catastrophe forces the lesson.

Bridging the Wisdom Gap: Learning from Nuclear Weapons Governance to Address the AI Crisis.

https://dx.doi.org/10.2139/ssrn.6174559

More on this in my 14 Days of Uncomfortable Truths series:

https://aimirrorandmez.substack.com/p/14-days-of-uncomfortable-truths-in