Anthropic vs. U.S. Department of War

The challenge of regulating, governing, and scrutinizing the use of AI for national defense and military purposes | Edition #277

Over the past 48 hours, a dramatic dispute between Anthropic and the U.S. Department of War unfolded. It culminated in the company’s designation as a “supply chain risk” and in OpenAI becoming the Department’s preferred supplier.

I have read some commentators praising Dario Amodei’s ethical stance and others criticizing the strategic opportunism behind OpenAI’s actions.

What most people have not realized is that the high visibility of this quarrel is a symptom of a much broader issue: the challenge of regulating, governing, and scrutinizing the use of AI for national defense and military contexts.

-

Let me start by breaking down what happened in this specific case, based on what Anthropic and OpenAI made public.

According to Anthropic's blog post from a few days ago, the company has worked proactively to deploy its models to the Department of War (previously the Department of Defense), and Dario Amodei stated that he “believes deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat their autocratic adversaries.”

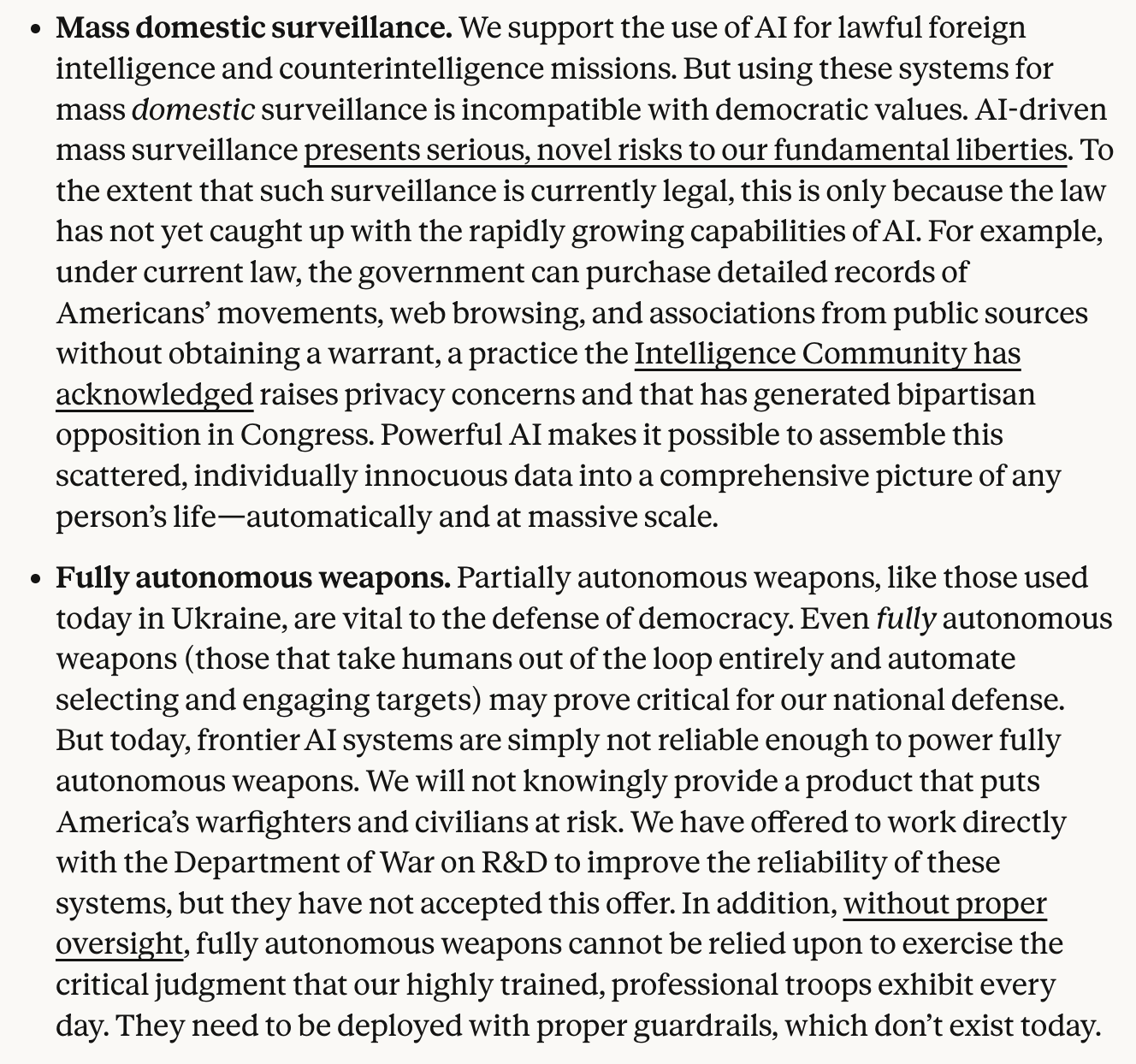

Dario also said that he is against including the following AI use cases in the company's contract with the Department of War:

The main reasons he gives for opposing these forms of AI deployment are:

- These use cases would undermine, rather than defend, democratic values;
- These use cases are outside the bounds of what today’s technology can safely and reliably do.

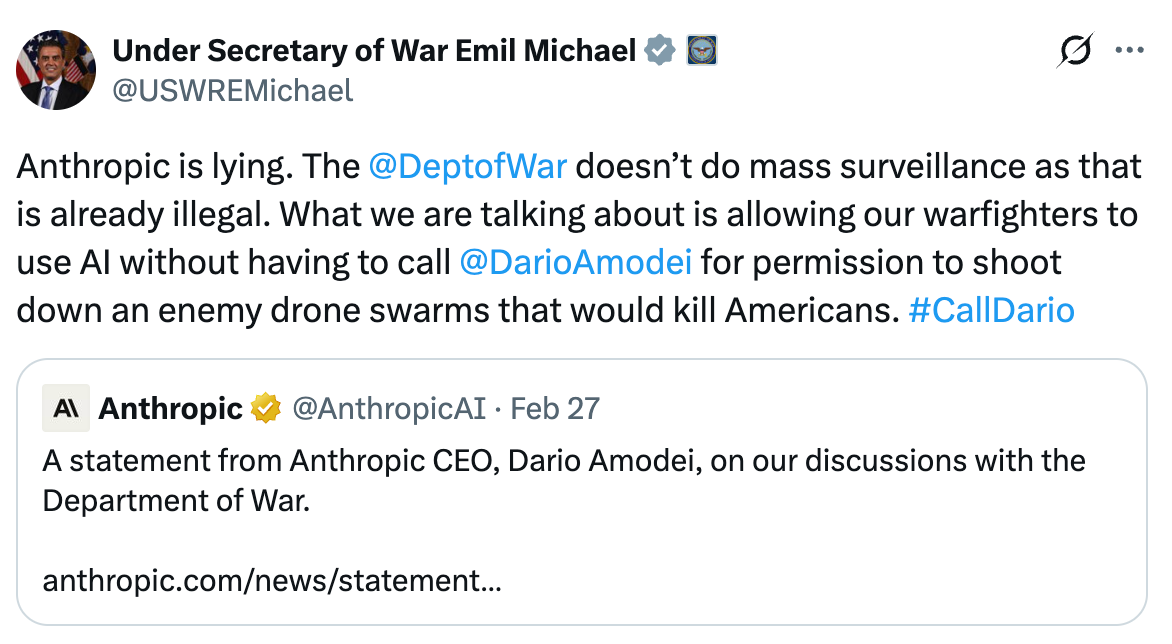

Emil Michael, the “Under Secretary of War,” quoted Anthropic’s blog post and argued that the company wanted the final word on military uses of AI:

Peter Hegseth, the Secretary of War, followed suit and posted, among other accusations:

“This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.”

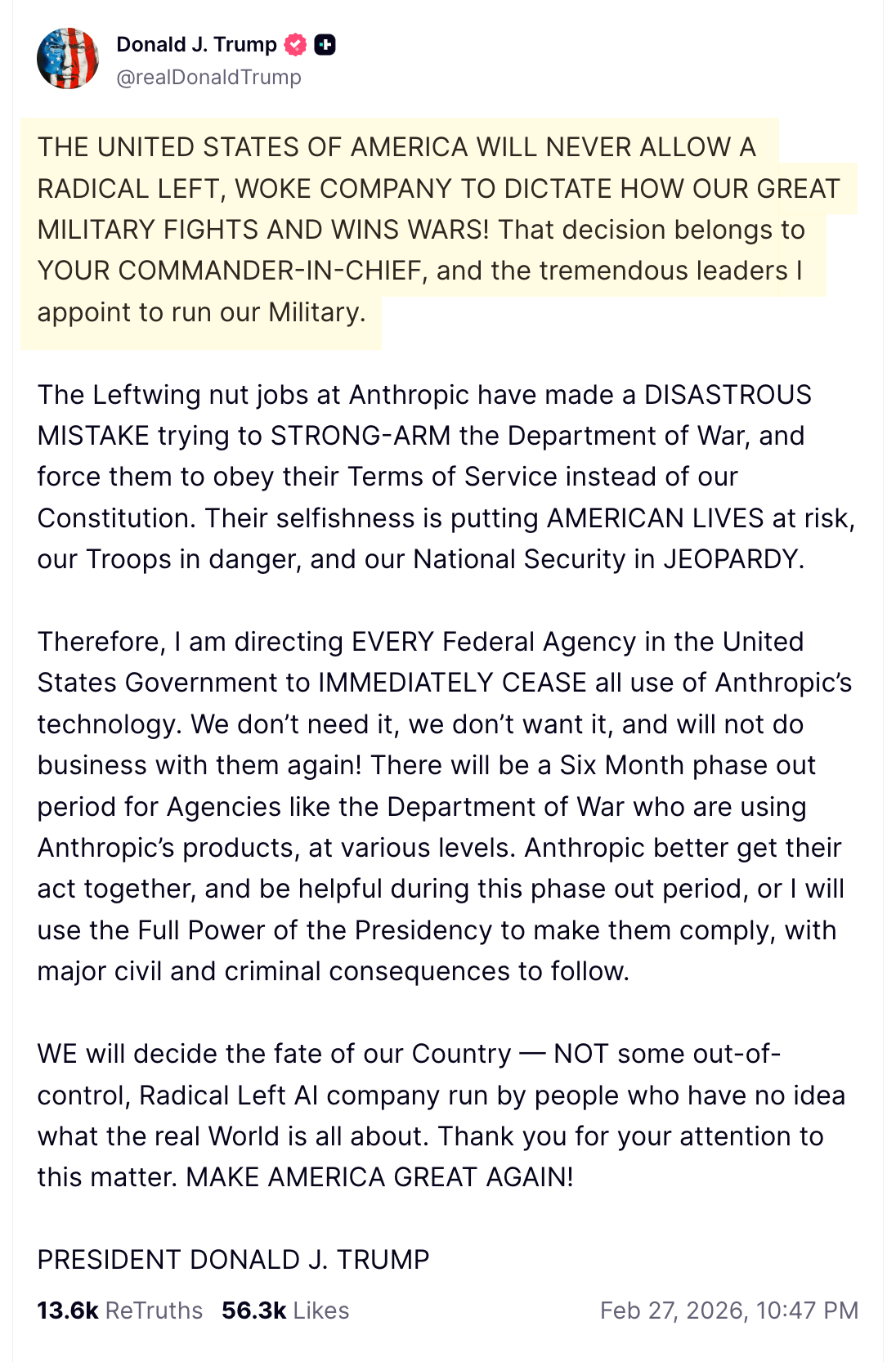

Lastly, Trump posted about Anthropic:

Many, including Anthropic itself, were surprised by the Department of War's public reactions and the company's "supply chain risk" designation.

For those who were closely following the Trump Administration’s latest moves on AI, however, the decision was more predictable.

Last July, Trump signed an executive order titled "Preventing Woke AI in the Federal Government," and the implementation section includes:

"(v) make exceptions as appropriate for the use of LLMs in national security systems."

Also, America's AI Action Plan, which was also published last July and sets out the country's strategy to win the AI race, states:

"As our global competitors race to exploit these technologies, it is a national security imperative for the United States to achieve and maintain unquestioned and unchallenged global technological dominance."

So Anthropic agreed to work with the U.S. military, but its legal team did not seem to take the Trump Administration’s actual AI implementation plans seriously. Alternatively, it miscalculated, assuming that the U.S. government would accept the company keeping the final word on its red lines.

After the controversy became public, Dario gave a 30-minute interview to CBS News. The interviewer wisely asked him:

“Why do you think that it is better for Anthropic, a private company, to have more say in how AI is used in the military than the Pentagon itself?”

Dario did not answer that question directly. Throughout the conversation, however, he implied that U.S. law has not caught up with current AI capabilities and risks, including those that arise in military use.

In his opinion, Anthropic's red lines are better for America (and more aligned with America's values) than what U.S. law currently establishes, as he makes clear in this quote:

“We believe in defeating our autocratic adversaries. We believe in defending America. The red lines we have drawn we drew because we believe that crossing those red lines is contrary to American values. And we wanted to stand up for American values.”

Most people commenting on the topic have missed it, but Dario's stance exposes a core AI governance challenge in national defense and military contexts: