General Purpose AI Will Never Be Safe

Authorities should require AI companies to 1) limit the purposes of their AI systems; 2) respect sector-specific rules; and 3) accept accountability for the harm their AI systems cause | Edition #267

I have written several times in this newsletter that financial advice, psychotherapy, and storytelling for kids should never be offered as a single product or service.

This is intuitive for most people, and in addition to the social, cultural, and ethical reasons why it is true, there is also an important legal basis:

Financial advice, psychotherapy, and storytelling products or services are used by different groups of people in different ways, present different risk profiles, and are governed by different sets of legal rules.

However, despite the existing consensus on the impracticality and potential unlawfulness of coupling financial advice, psychotherapy, storytelling, and other areas into a single product, general-purpose AI systems, starting with ChatGPT in November 2022, broke this rule.

Today, you can use general-purpose AI chatbots like ChatGPT, Gemini, Claude, and other competitors to obtain financial advice, psychotherapy, storytelling, and help in numerous other domains.

These various domains have little to nothing in common and would never be offered as a single physical product or service in an offline context.

Most regulatory authorities have not realized it yet (and hopefully this edition will offer more information and awareness about the topic), but this is a major obstacle to effective AI regulation and AI chatbot safety, and it must change.

When the same general-purpose AI chatbot is being used by:

a man looking for intimate companionship,

a teenage girl secretly asking about drugs and sex,

and a person with severe mental health issues looking for psychological support,

there will never be a single set of rules that will provide adequate service, protect the people involved, and address the context-specific risks.

When rules are inadequate for a product or service’s risk profile, people remain unprotected, and cases of harm happen more often (as we have observed over the past three years in AI, especially in the context of mental health harm and suicide).

Also, insufficient rules help AI companies avoid accountability for the harms their systems cause. They will argue that it is too difficult or "technically unfeasible" to prevent harm (another argument we have heard multiple times in the past three years).

-

The only solution to properly regulate AI chatbots - and I know many in AI will get angry with what I am saying, as every time I propose stricter AI rules, I am personally attacked - is to require AI companies to provide purpose-specific AI chatbots.

It is a big statement, but authorities and policymakers must realize that general-purpose AI chatbots will never be properly regulated, for the reasons I described above.

Authorities should require AI companies to define the target audience and use cases for their AI systems (including chatbots). The risk profile should be clear, as should the specific standards, technical rules, filters, and built-in guardrails that apply to it.

An AI company that wants to offer an AI system providing medical advice services, for example, should be required to obtain certification or hold a legally recognized credential demonstrating compliance with all applicable laws governing this sector, including product safety, data protection, consumer protection, and other laws.

Note that I am not opposed to general-purpose AI models (such as LLMs). As I discuss in my AI Governance Training, models differ from systems, and different rules apply to providers of AI models and providers of AI systems (if you have questions about the differences between these two terms, I recommend reading the EU AI Act’s definitions in Article 3, items 63 and 66).

An LLM can power a purpose-specific AI chatbot, and LLMs have important use cases and should continue to be developed. Also, many places in the world have rules for AI models, and these rules must continue to evolve to address the emerging challenges.

I am talking here about AI systems, the user-facing applications, the ones that will be subject to product-specific rules.

-

If you think that what I wrote above is too radical, or that it does not reflect the real world, consider yesterday’s public argument between Elon Musk and Sam Altman, which highlights the regulatory challenge I described above.

Leaving their personal fight aside, and without using regulatory framing, Sam Altman is admitting that general-purpose AI systems like ChatGPT are too difficult to regulate.

They serve too many groups of people with diverse interests and vulnerabilities, across too many use cases, posing too broad a range of risks.

As the title of today's newsletter says, general-purpose AI systems will never be safe, and regulatory authorities and policymakers must understand the risks and take the necessary action before it is too late.

The public must also make clear that they will not accept having their loved ones exposed to unsafe AI systems and that they demand accountability from AI companies.

As the old internet dies, polluted by low-quality AI-generated content, you can always find pioneering, human-made thought leadership here. Thank you for helping me make this newsletter a leading publication in the field.

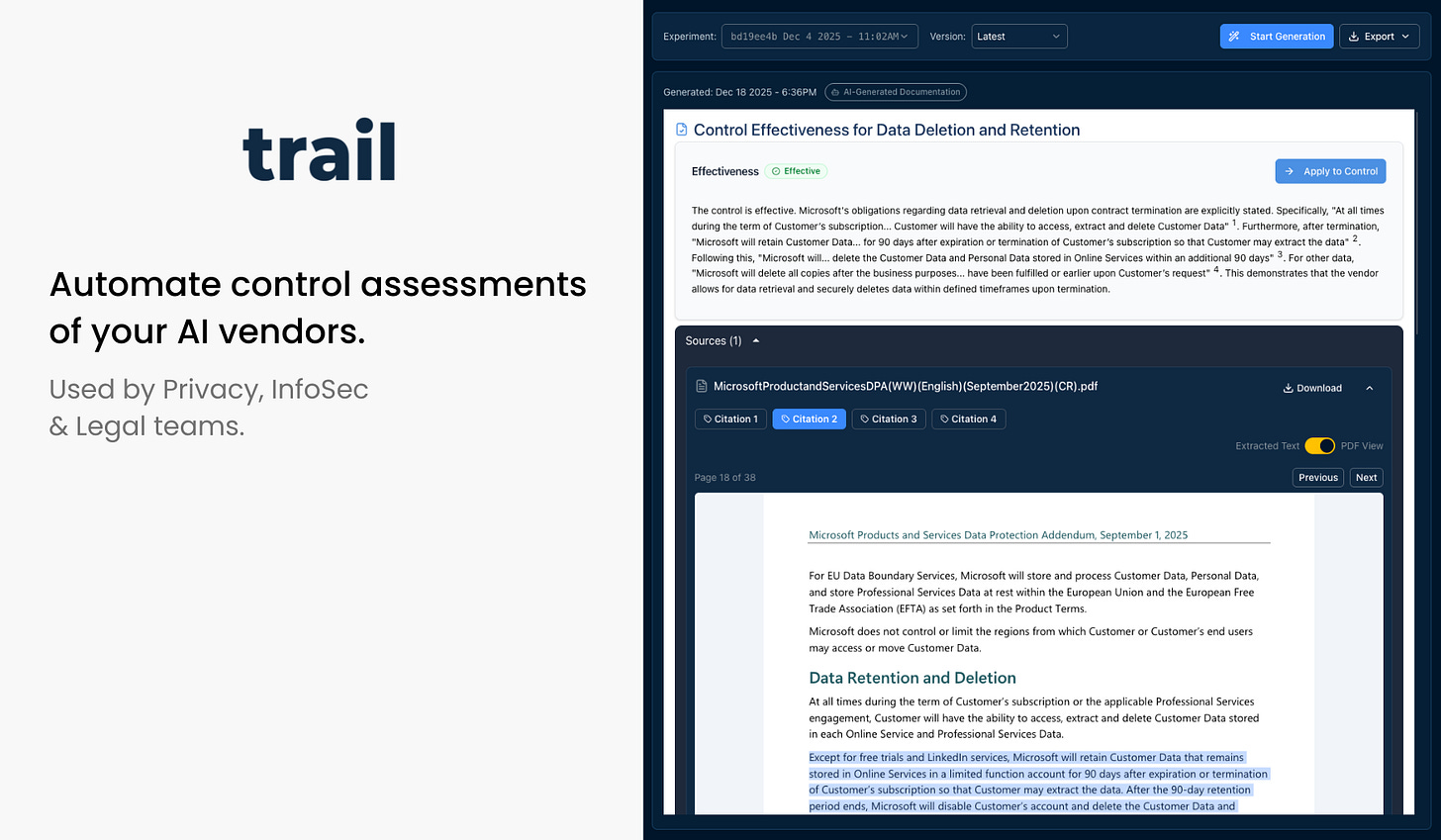

Check out our sponsor: Trail

Automate vendor assessments with Trail’s AI. The platform scans any file type to assess control implementation and compliance requirements, replacing manual review with automated processes. Request your free demo now.

it isn't safe

it is dangerous

things will change

the AI/LLM regulation must start and reside between the ears of the User.

this concept is foreign, if not antithetical, to the entire education, therapy, business and all other norms/narratives.

this cannot be approached like other past tech - from scratches on the cave wall, to radio, TV, and the interwebs, societies have regulated/manipulated/caged/destroyed innovation all in the name of 'safety' and the general good.

this will not work with AGI and whatever comes next.

the best way is to teach humans how to be more human than AI

via

we all have our own AI.

maybe we should get back to teaching how to think

instead of

what to think

What about books or the internet? A person could check out several books from a library or browse the internet to get advice. They could go on Reddit and accept advice from anyone who happens to be interested in providing it, whether that person is qualified or not.

You protect people from their own poor judgment. Safety isn't free. It requires the removal of autonomy in order to implement "protections" for people who don't even want them. If someone is asking a chatbot for financial advice, they are doing it because that is what they want to do. Who are you to come in and say that they shouldn't be allowed to do so for their own "protection"?

This would be a violation of human rights and autonomy. Remember, the road to hell is paved with good intentions.